Scott Singer, Matt Sheehan

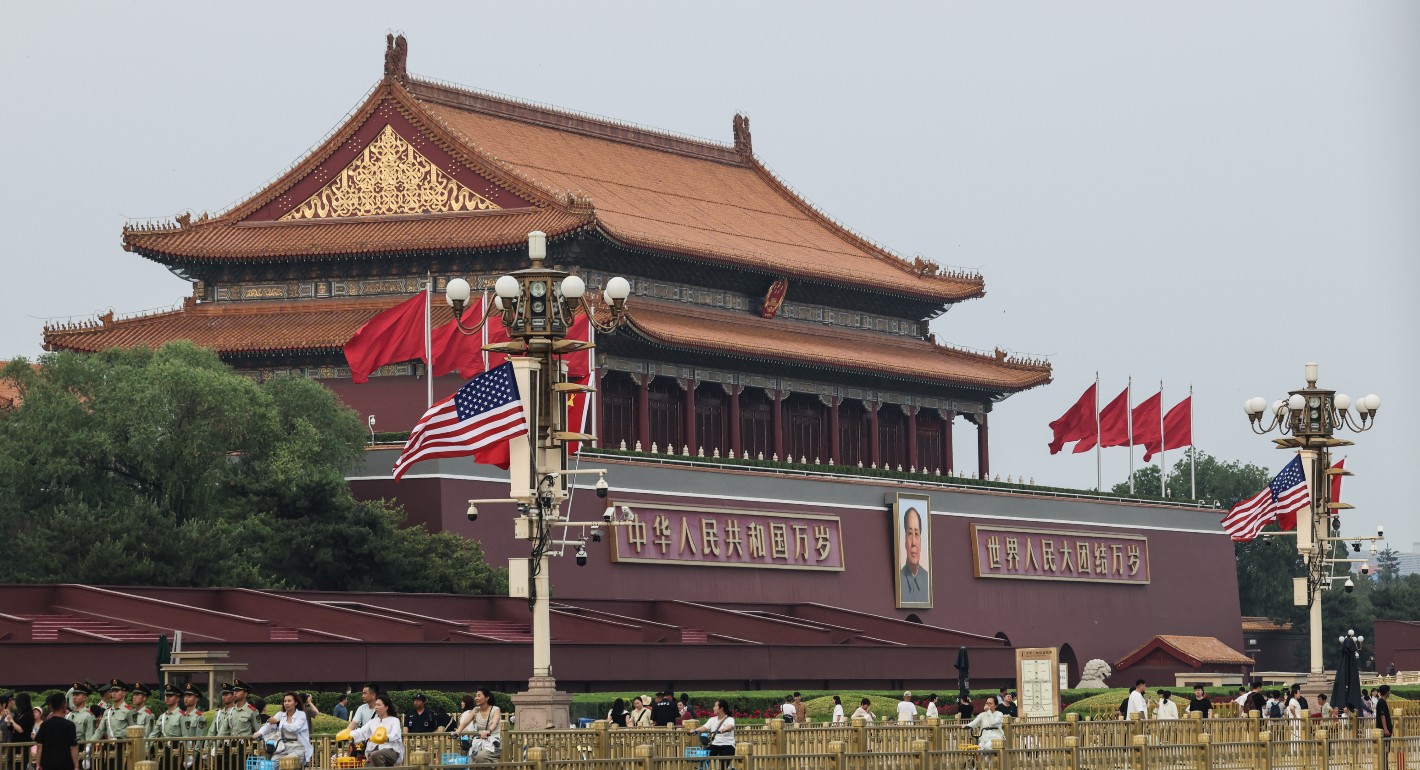

Tiananmen Gate on May 13, 2026. (Photo by Lintao Zhang/Getty Images)

Trump and Xi Should Tackle a Previously Impossible AI Conversation

Previous dialogues ended in failure. This time could be different.

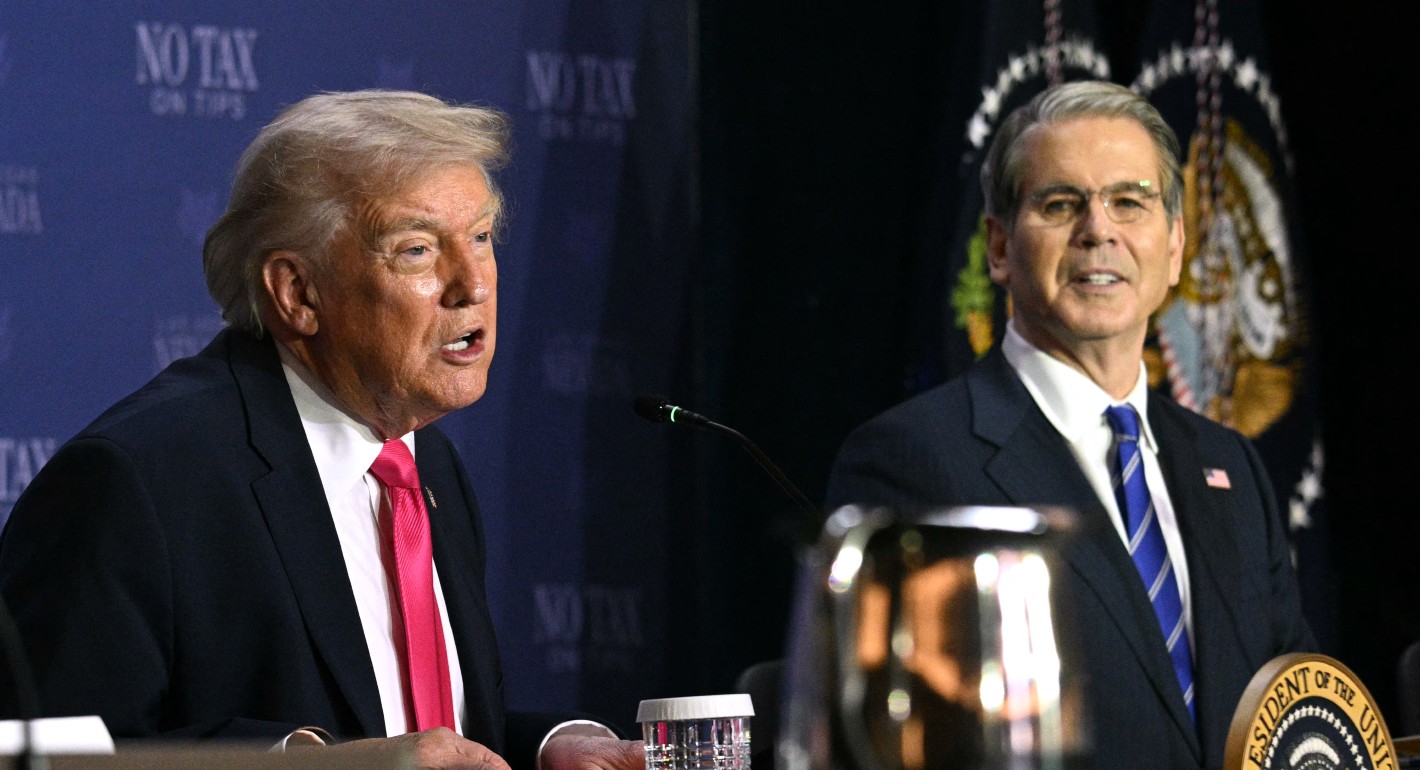

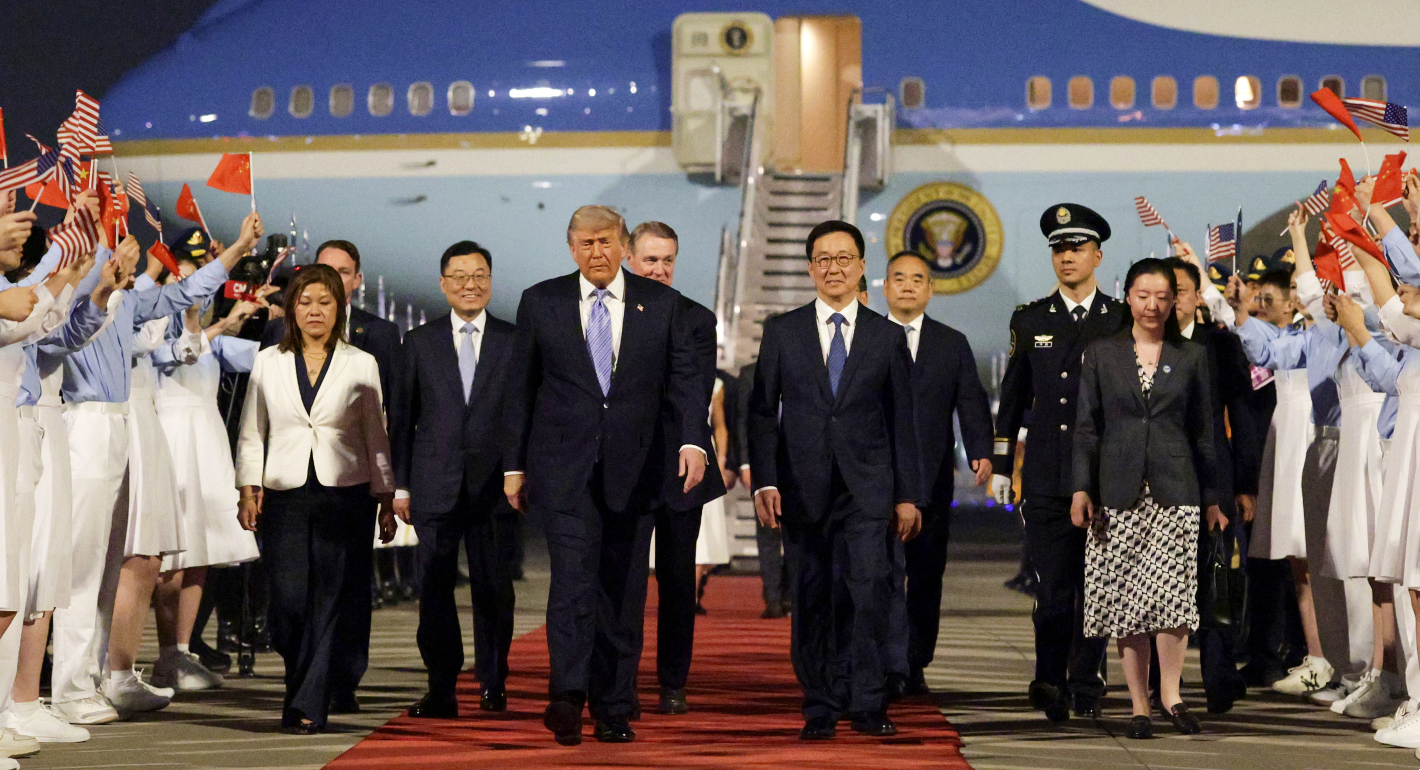

When U.S. President Donald Trump meets Chinese President Xi Jinping in Beijing on Thursday, artificial intelligence will be on the agenda. The administration is right to put it there.

Current frontier models—including Claude Mythos and GPT-5.5—have already uncovered cybersecurity vulnerabilities that experts missed for decades. U.S. Treasury Secretary Scott Bessent has warned that AI could be used to launch attacks on American banks at scale and urged the financial sector to prepare. Soon, Chinese models will likely have comparable capabilities—and pose comparable risks. Whether a model is built in San Francisco or Shenzhen, it can be misused to cause catastrophic harm anywhere.

Official U.S.-China dialogue on AI has garnered appropriate skepticism, including from former U.S. officials. After all, the first U.S.-China dialogue, held in Geneva during President Joe Biden’s administration in 2024, failed to deliver meaningful progress. In that first conversation, the U.S. side brought technical experts for a more granular conversation about the most extreme risks AI could cause, while the Chinese side brought foreign policy experts who were motivated to lift export controls. Put simply, the two sides expected to have different conversations about AI.

Any deal involving “chips for safety”—in which the U.S. eases export controls on advanced AI chips in return for Chinese commitments to stronger AI safety measures—ought to be rejected in 2026, just as it was rejected in 2024. And a grand bargain that leads to a broad détente in U.S.-China AI competition is neither realistic nor desirable.

But a lot has changed since the first round of U.S.-China AI dialogues. China has begun to invest more in AI safety. For example, Shanghai AI Lab has developed its own evaluations for extreme risks with results that Anthropic cofounder Jack Clark said were similar to what his company had found. The recognition that the most extreme risks must be taken seriously is no longer one-sided.

What is needed now is a conversation that was previously impossible: one in which both sides agree that the most extreme risks are real and that managing them is in their shared interest. A narrowly scoped conversation focused on extreme risks would allow both sides to put up guardrails against the misuse cases that neither government can contain alone.

As a starting point, the U.S. and China should prioritize discussing how to test and evaluate models for extreme risks. Just as automobiles undergo crash testing before they are sold to consumers, AI systems need some baseline standards for testing before they are deployed. But tests for AI in both countries are nascent, largely nonstandardized, and technically complex. And unlike cars, models themselves change after they are deployed, both because developers update them and because users discover new capabilities.

As a result, much more investment and scientific research is needed. Bilateral dialogue can accelerate sharing of best practices in testing. For example, both sides can talk about how to best structure “red teams” designed to intentionally get the model to misbehave to uncover vulnerabilities. That’s one of the most important ways companies locate—and subsequently patch up—problems before models are released widely to the public.

But there should be clear limits to what each side shares. They shouldn’t explain how they try to prompt models to disclose sensitive data. Nor should they share domain-specific knowledge fed into models in dual-use areas like biology that could increase risks.

To keep these conversations from inadvertently revealing intellectual property or national security information, the U.S. government should work closely with frontier AI developers. These companies are the world’s foremost experts on the products they are building and have genuine expertise they can bring to the conversation. Incorporating their technical expertise—while being aware of companies’ own interests—can ensure that any discussion stays grounded in a clear understanding of real risks.

Of course, the U.S. and China will not be able to make AI safe on their own. Some of the most meaningful risk mitigations, such as improving physical security protocols at biological labs, will need to be implemented globally. But this global conversation will require the United States and China to build the foundation for everyone else.

There will never be a good time for U.S.-China AI diplomacy. But 2026 is a much better time than 2024. This week, both sides should start that conversation.

About the Author

Fellow, Technology and International Affairs

Scott Singer is a fellow in the Technology and International Affairs Program at the Carnegie Endowment for International Peace, where he works on global AI development and governance with a focus on China.

Carnegie does not take institutional positions on public policy issues; the views represented herein are those of the author(s) and do not necessarily reflect the views of Carnegie, its staff, or its trustees.
